Bing launched an AI Performance report inside Webmaster Tools earlier this month. We pulled our data the same day.

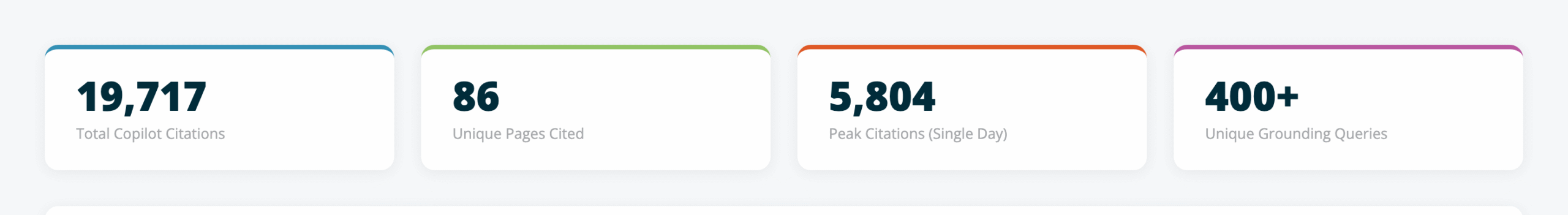

91 days of Copilot citation data. 19,717 total citations across 86 pages. One page accounting for 69% of all of them.

We’ve been tracking AI search visibility for clients using Scrunch and our AI Grader for months. But this is different. This is Microsoft showing us exactly how often — and why — Copilot pulls our content as a source when generating answers.

The data is early, imperfect, and worth looking at closely. You can explore the full interactive dashboard or read on for the highlights.

What the AI Performance Report Shows

Microsoft released this as a public preview in February 2026. Anyone with a verified site in Bing Webmaster Tools can access it.

You get three data exports:

- Daily overview — total citations and number of unique pages cited, by day

- Page-level stats — which URLs get cited and how often

- Grounding queries — the retrieval queries that triggered citations

No API access yet. Fabrice Canel from Microsoft confirmed on X that API support is on their backlog but didn’t give a timeline. For now, it’s CSV exports from the dashboard.

Our Numbers

We pulled 91 days of data for searchinfluence.com, covering November 12, 2025 through February 10, 2026.

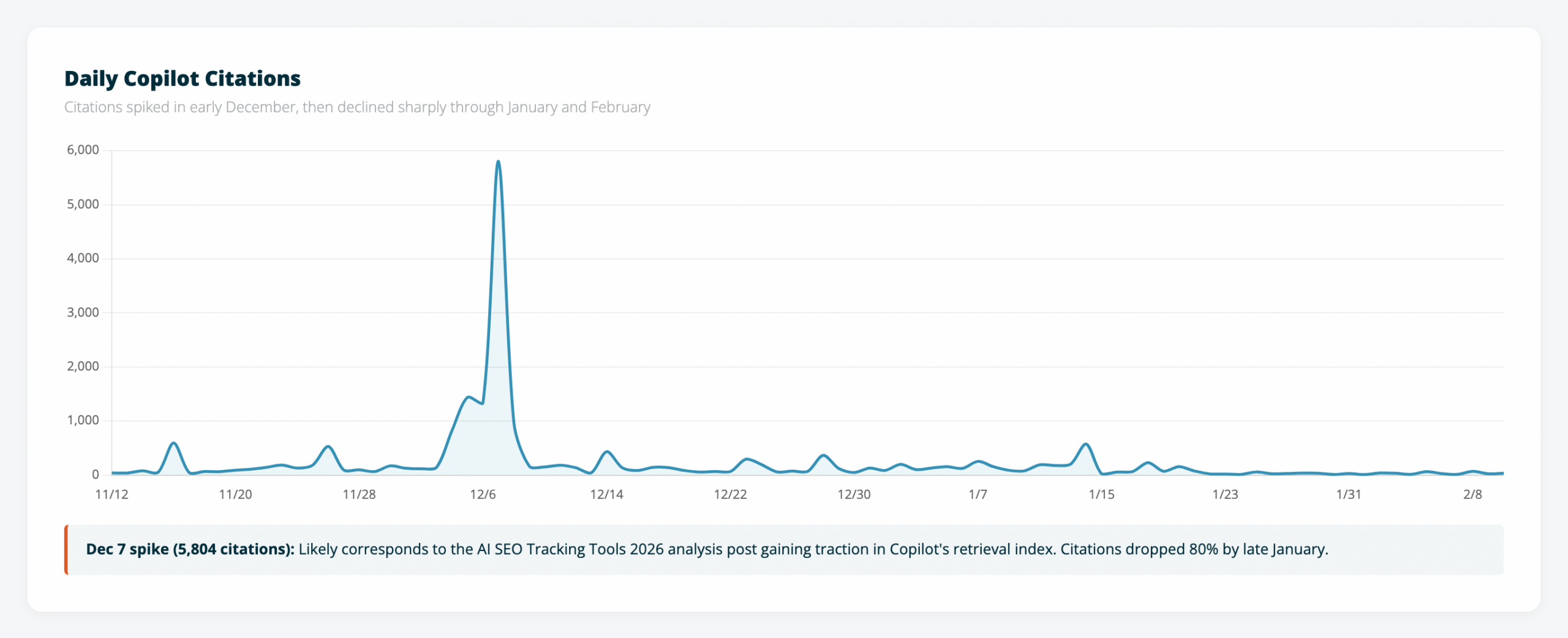

The timeline tells a simple story: citations spiked hard in early December, then fell off.

December 7 hit 5,804 citations in a single day. That spike almost certainly corresponds to our AI SEO Tracking Tools 2026 analysis gaining traction in Copilot’s retrieval index. By late January, daily citations had dropped below 50.

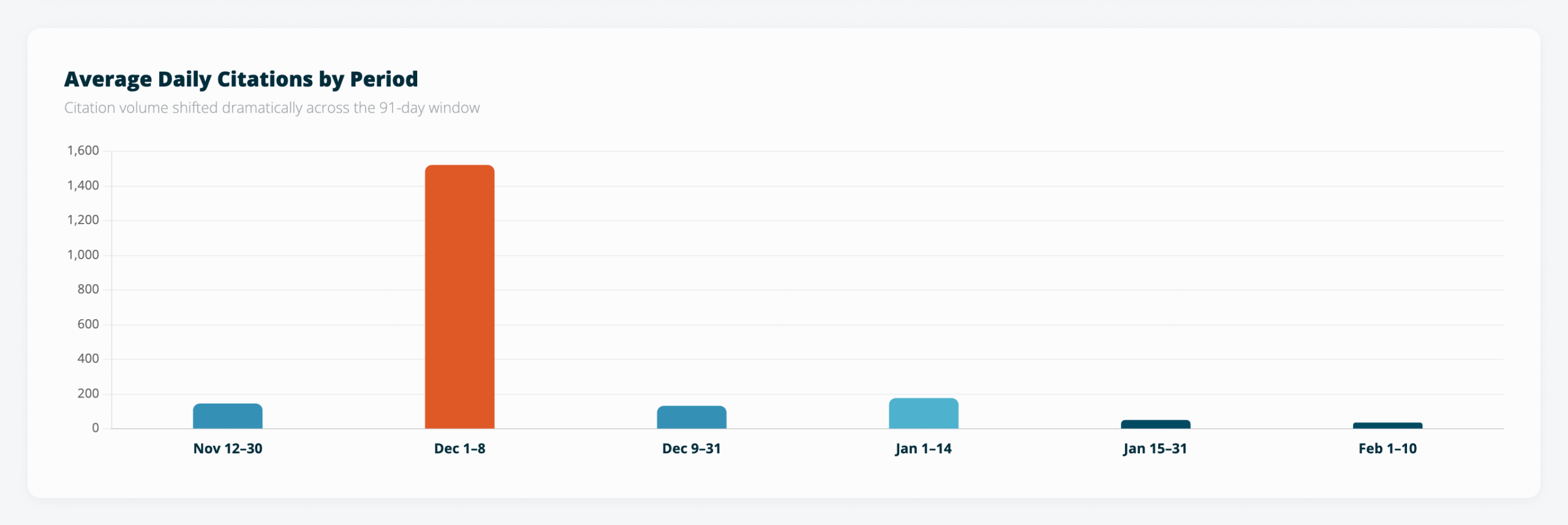

The period breakdown makes the decline even clearer. Dec 1-8 averaged 1,520 citations per day. February: 34. That’s a 97% drop in two months.
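If you want to run the same check on your own export, the decline is a simple ratio. Here is a minimal sketch in Python using the two period averages quoted above; the function name is ours, purely for illustration.

```python
def percent_drop(before: float, after: float) -> float:
    """Return the decline from `before` to `after` as a percentage of `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Period averages quoted in the text (citations per day).
dec_avg = 1520
feb_avg = 34

drop = percent_drop(dec_avg, feb_avg)
print(f"{drop:.1f}% drop")
```

With these rounded averages the result lands between 97 and 98 percent, in line with the figure quoted above.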

A few possible explanations: the analysis was written for a specific moment in time and may be aging out of Copilot’s freshness window, new competing content entered Bing’s index, or Microsoft changed how Copilot’s retrieval weights sources. We’re still looking into it.

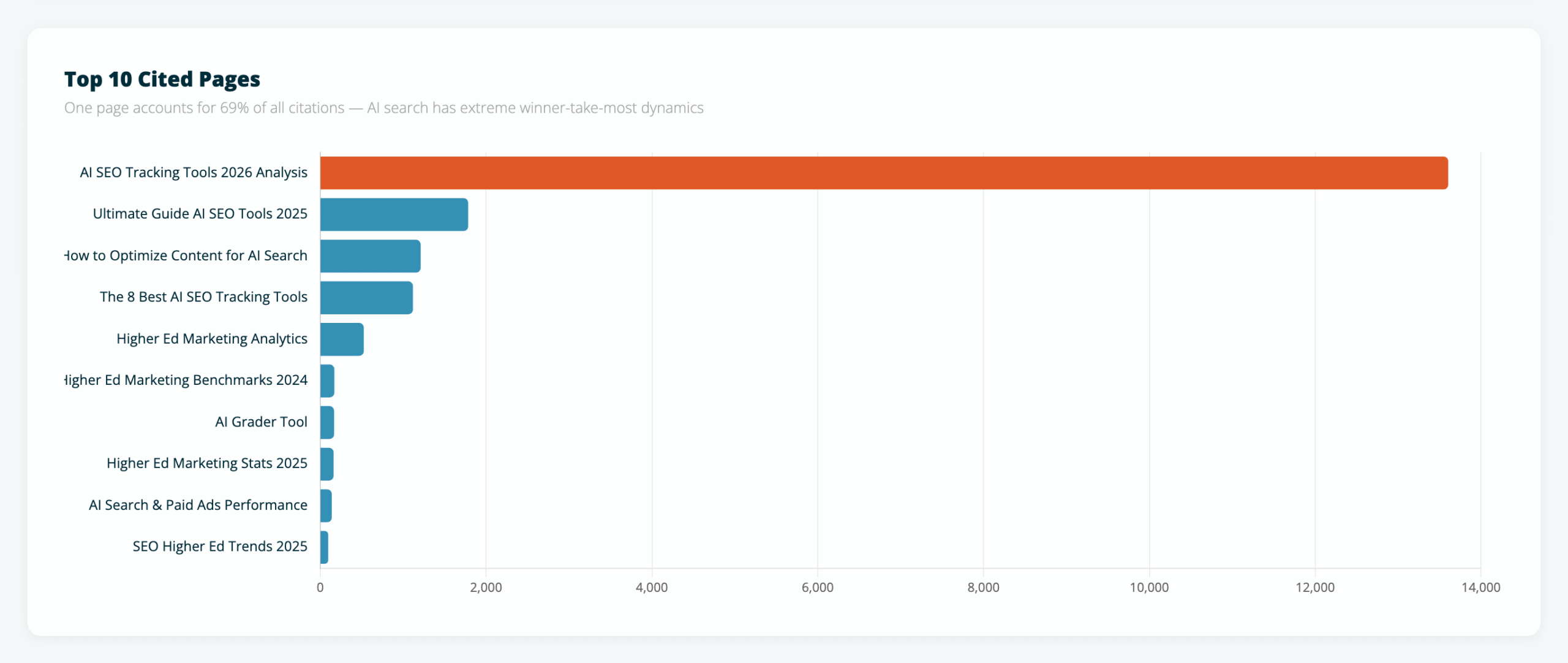

One Page Captures Almost Everything

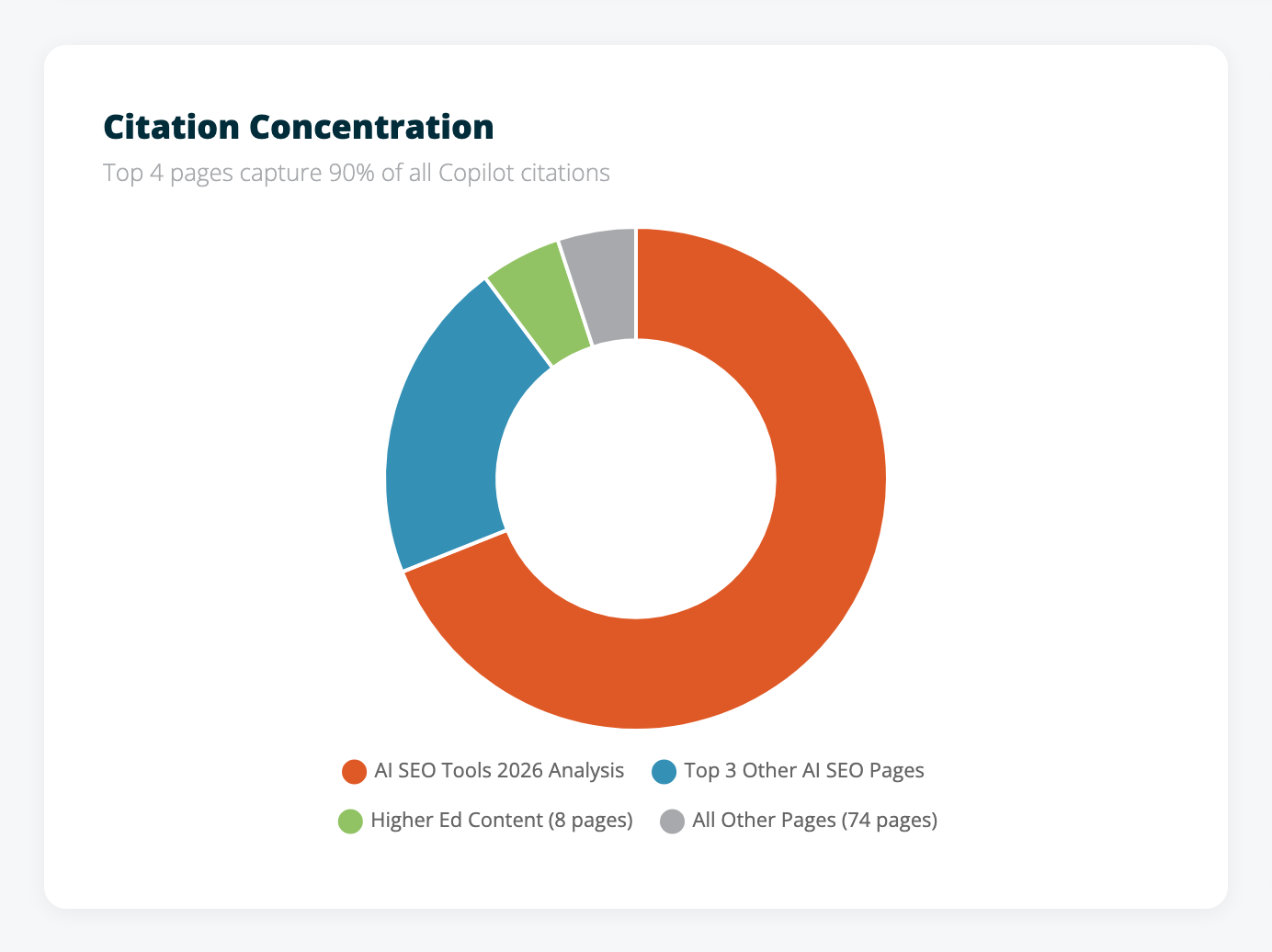

Of the 86 pages Copilot cited across the full period, one captured 69% of all citations.

The top four pages — all AI SEO content — accounted for 90% of total citations. Everything else on the site combined makes up the remaining 10%.
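The same concentration check is easy to run on any page-level export. The sketch below is one way to do it; the URLs and counts are hypothetical stand-ins shaped to mirror the split described above, not our real data, and `top_n_share` is a helper name we made up.

```python
def top_n_share(citations_by_page: dict[str, int], n: int) -> float:
    """Fraction of all citations captured by the n most-cited pages."""
    total = sum(citations_by_page.values())
    if total == 0:
        return 0.0
    top = sorted(citations_by_page.values(), reverse=True)[:n]
    return sum(top) / total


# Hypothetical page-level counts (illustrative only, not the real export).
sample = {
    "/blog/page-a": 690,
    "/blog/page-b": 100,
    "/blog/page-c": 70,
    "/blog/page-d": 40,
    "/services/page-e": 30,
    "/services/page-f": 25,
    "/about": 25,
    "/contact": 20,
}

print(f"Top page share: {top_n_share(sample, 1):.0%}")
print(f"Top four share: {top_n_share(sample, 4):.0%}")
```

If the top-one and top-four shares on your own site look anything like this, a handful of pages are carrying your AI visibility.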

That concentration is more extreme than what we see in traditional search. Google distributes traffic across many pages because users click through a list of results. AI search works differently — it picks one or two sources to ground its answer, and those sources absorb almost everything.

Building deep authority on your strongest topics matters more than spreading thin across many. In AI search, being the second-best resource on a topic might mean getting zero citations.

The Grounding Queries Are the Most Useful Part

The third export — grounding queries — is where we found the most actionable data. It also revealed something about how Copilot’s retrieval system works under the hood.

These queries aren’t what users typed into Copilot. They’re what Copilot’s retrieval system searched for internally when it needed a source to ground its answer.

Look at these examples. Nobody types queries like this into a search box:

- “accuracy of AI SEO GEO platforms tracking position in AI shopping guides”

- “AI search optimization GEO platforms competitor tracking pricing features positioning”

- “push data to analytics platforms or tag managers from AI search optimization GEO platforms”

Those read like machine-generated retrieval queries — Copilot decomposing a user’s conversational question into keyword-dense search queries optimized for Bing’s index.

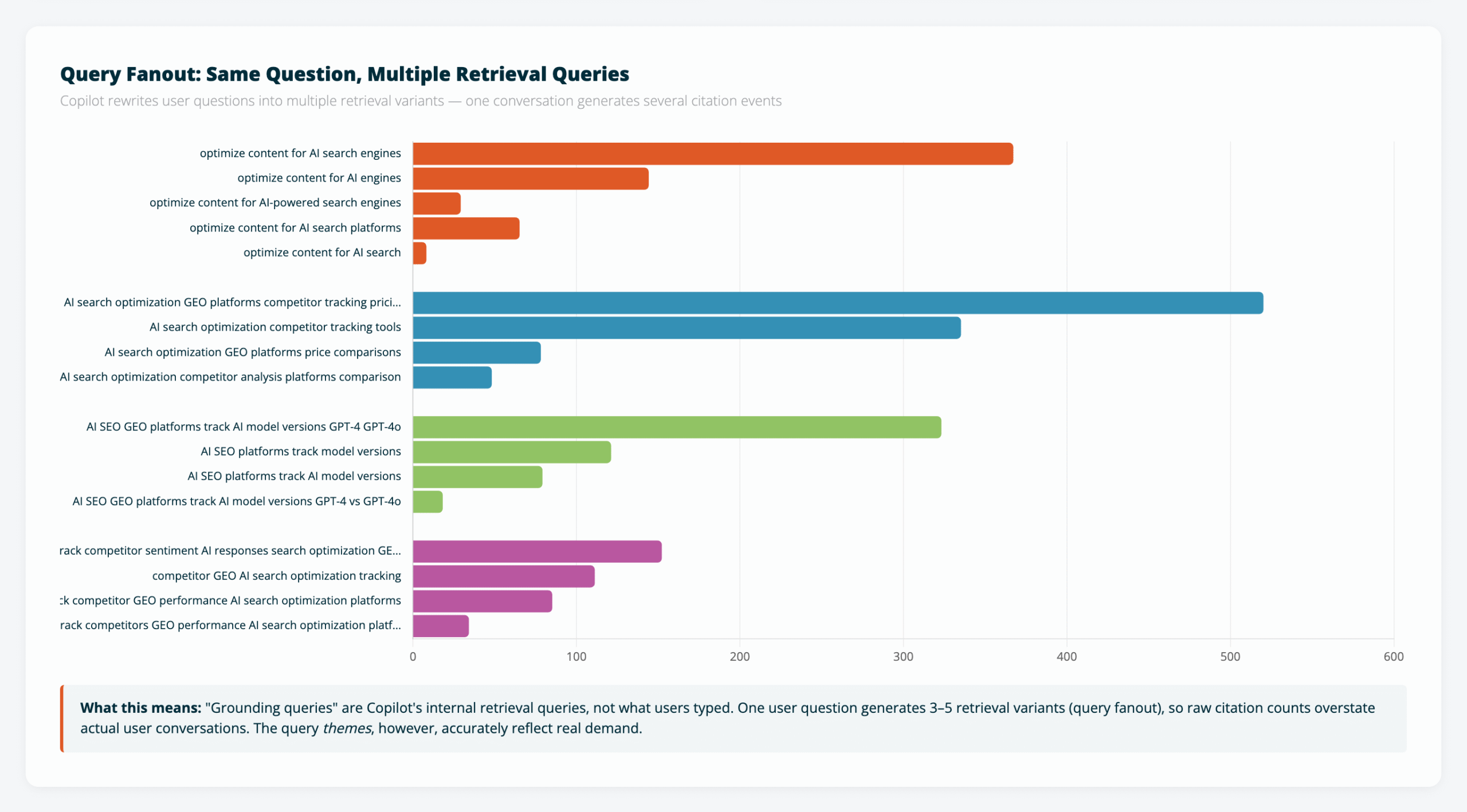

Then there’s query fanout: the same user question produces multiple retrieval variants.

The “optimize content for AI search” cluster shows five variations of the same query. “Track AI model versions” shows four. Same intent, rephrased to catch different documents in the index.
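One rough way to spot fanout clusters in a grounding-query export is to group queries that share most of their words. The sketch below uses Jaccard word overlap with a greedy pass. This is our own illustrative heuristic, not how Copilot generates or groups its queries, and the sample queries and the 0.5 threshold are assumptions.

```python
def tokens(query: str) -> frozenset[str]:
    """Lowercased set of whitespace-separated words in a query."""
    return frozenset(query.lower().split())


def jaccard(a: frozenset[str], b: frozenset[str]) -> float:
    """Word overlap: size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_queries(queries: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedily add each query to the first cluster whose first member is similar enough."""
    clusters: list[list[str]] = []
    for query in queries:
        for cluster in clusters:
            if jaccard(tokens(query), tokens(cluster[0])) >= threshold:
                cluster.append(query)
                break
        else:
            # No existing cluster matched, so this query starts a new one.
            clusters.append([query])
    return clusters


# Hypothetical grounding queries (illustrative, not copied from the export).
sample_queries = [
    "optimize content for AI search",
    "how to optimize content for AI search engines",
    "optimize website content for AI search",
    "track AI model versions",
    "track AI model versions over time",
]

for group in cluster_queries(sample_queries):
    print(len(group), "variants:", group[0])
```

Word overlap will miss rephrasings that use different vocabulary, so treat the cluster counts as a floor rather than a precise measure of fanout.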

This matters for interpreting the numbers. One user conversation likely generates 3-5 citation events through this fanout process. So our “19,717 citations” probably represent somewhere around 4,000 to 6,600 actual user conversations. The raw numbers are inflated by the retrieval architecture itself.
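The back-of-envelope math behind that estimate can be sketched as follows. The 3-5 fanout range is our assumption from reading the query clusters, not a figure Microsoft has published, and the function name is hypothetical.

```python
def estimate_conversations(
    total_citations: int, min_fanout: int, max_fanout: int
) -> tuple[int, int]:
    """Return (low, high) conversation estimates for a range of citations per conversation."""
    if not 0 < min_fanout <= max_fanout:
        raise ValueError("fanout bounds must satisfy 0 < min_fanout <= max_fanout")
    low = total_citations // max_fanout   # more citations per conversation, fewer conversations
    high = total_citations // min_fanout  # fewer citations per conversation, more conversations
    return low, high


low, high = estimate_conversations(19_717, min_fanout=3, max_fanout=5)
print(f"Estimated conversations: {low:,} to {high:,}")
```

The bounds come out near 3,900 and 6,600, the same ballpark as the rounded range in the text. Change the fanout assumption and the estimate moves with it, which is the main reason to treat raw citation counts as directional.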

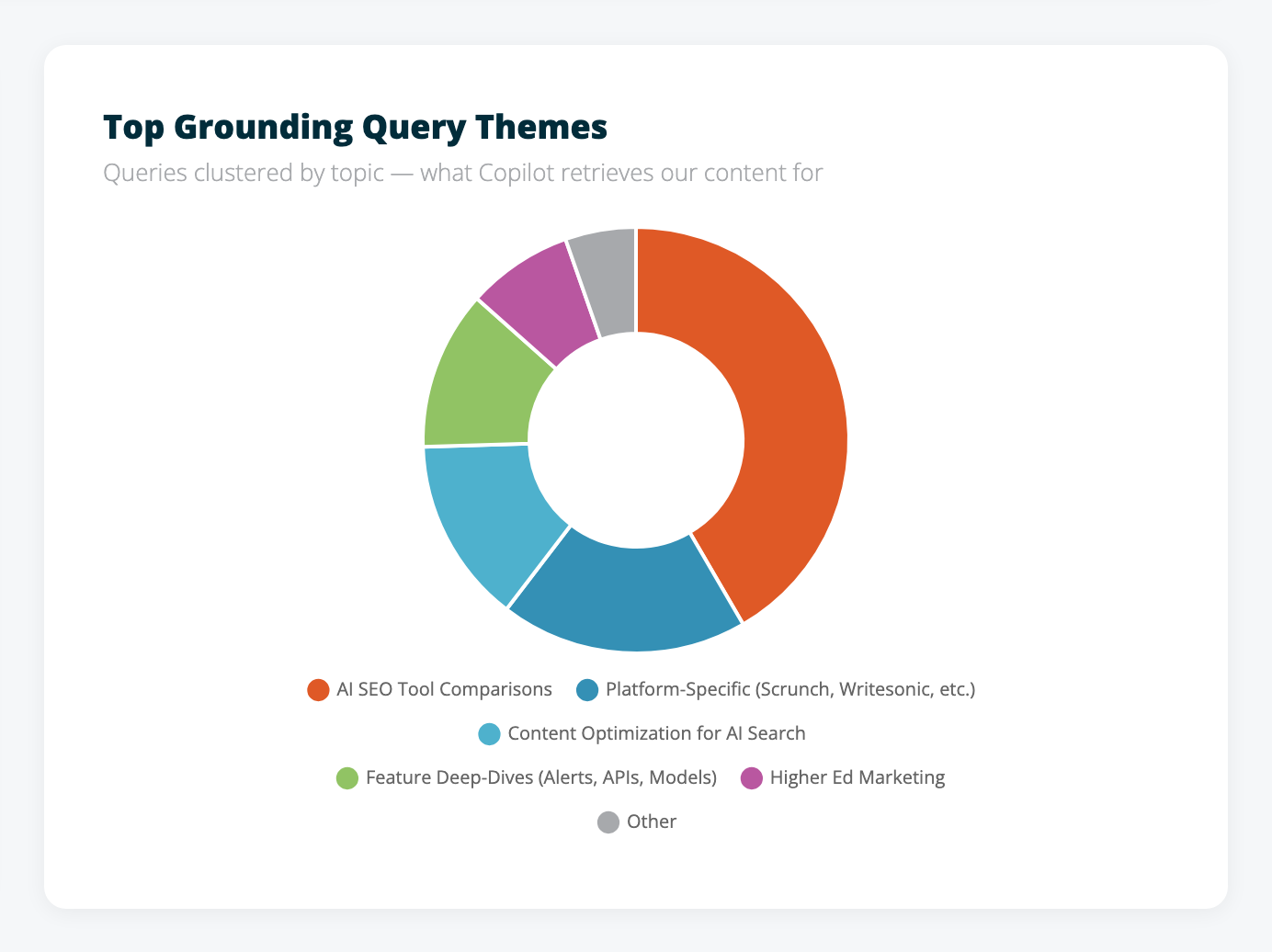

But the query themes are accurate. Over 400 unique grounding queries cluster into clear topic areas.

AI SEO tool comparisons dominate — pricing, features, platform coverage, specific vendor evaluations. Higher ed marketing shows up as a secondary cluster. Both line up exactly with the content areas where we’ve invested the most over the past year.

What This Means for Content Strategy

Four things stood out from the data.

Structured comparison content earns citations. The page capturing 69% of all citations is a detailed tool-by-tool comparison with pricing, features, trade-offs, and named vendors. AI retrieval systems need specific, structured data to ground their answers. High-level overviews without specifics don’t get pulled in.

Grounding queries are a new form of keyword research. These aren’t the same queries that show up in Google Search Console. They represent what AI retrieval systems search for when answering user questions, which is a different target than traditional SEO keywords. If you have access to this data, use it to find content gaps and to see which angles of your topic areas AI systems are looking for sources on.

AI systems cite a narrow set of pages. Even on days with 5,000+ citations, only 15-18 unique pages got referenced. Copilot picks a small number of authoritative sources rather than pulling from a wide set. Depth beats breadth.

Citation decay is real and fast. Our 97% decline from December to February suggests that content freshness matters in AI retrieval, that competitive content displaced us, or both. Publish-and-forget doesn’t work for AI visibility any more than it does for traditional SEO, and the decay is probably faster.

What We Can’t See Yet

An honest look at the gaps, because there are several.

This is Copilot only. No equivalent data exists yet from ChatGPT, Perplexity, Gemini, or Google AI Overviews. The query themes likely transfer across platforms — people ask similar questions regardless of which AI they use — but citation volumes could look very different elsewhere.

No click-through data. Citations don’t equal traffic. We don’t know how many users clicked through from a Copilot answer to our site versus just reading the AI-generated response. Microsoft may add this metric later, but right now we can measure AI visibility, not engagement.

No competitive view. We can see our own citations but not what other sites Copilot cited alongside ours for the same queries. Knowing who else gets cited — and for which queries — would make this data significantly more useful.

The data is still in preview. Microsoft has said more data is coming throughout 2026. What we have now is a starting point.

What We’re Doing With This

We’re using the grounding queries to map content gaps. 400+ queries show us what Copilot’s retrieval system searches for when users ask about our topic areas. Where our existing content doesn’t fully answer those queries, that’s where we’re focusing next.

For clients, we’re adding Copilot citation metrics to monthly reports. “Your site was cited X times in AI search this month across Y pages” is a concrete number. Most of the industry is still guessing about AI visibility. This is actual measurement, even if it’s limited to one platform.

And we’re layering this data alongside what we already track through Scrunch (AI visibility across ChatGPT, Perplexity, and other platforms) and our AI Grader (content readiness scores). Three data sources covering three layers: content quality, AI visibility, and actual citations. Together, they give us the closest thing to a full picture of AI search performance that exists right now.

Check Your Own Data

If you want to see your Copilot citation numbers, verify your site in Bing Webmaster Tools and look for the AI Performance section. The report is available for all verified sites.

Want to see how your content scores for AI search readiness right now? Try the AI Grader — it takes about 30 seconds.
